28 March 2021

How to auto-paginate a DynamoDB Scan using an async generator

The process for paginating queries with DynamoDB is relatively straightforward, but rather tedious:

A single Scan only returns a result set that fits within the 1 MB size limit. To determine whether there are more results and to retrieve them one page at a time, applications should do the following:

1. Examine the low-level Scan result: if the result contains a LastEvaluatedKey element, proceed to step 2, otherwise there are no more items to be retrieved.

2. Construct a new Scan request, with the same parameters as the previous one. However, this time, take the LastEvaluatedKey value from step 1 and use it as the ExclusiveStartKey parameter in the new Scan request.

3. Run the new Scan request.

4. Go to step 1.
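
Written out by hand, the steps above look roughly like the loop below. This is only a sketch: `scanPage` is a hypothetical function that takes Scan parameters and resolves to `{ Items, LastEvaluatedKey }` (with the AWS SDK v2 that could be something like `(params) => docClient.scan(params).promise()`), and `makeFakeScan` is a made-up in-memory table so the loop can run without AWS credentials.

```javascript
// Manual pagination: keep scanning until no LastEvaluatedKey comes back.
// `scanPage` is a hypothetical function: (params) => Promise<{ Items, LastEvaluatedKey }>.
async function scanAll(scanPage, params) {
  const items = [];
  let ExclusiveStartKey; // undefined on the first request
  do {
    // Same parameters every time, plus the key from the previous page (if any).
    const page = await scanPage({ ...params, ExclusiveStartKey });
    items.push(...(page.Items || []));
    // If this is undefined, there are no more items to retrieve.
    ExclusiveStartKey = page.LastEvaluatedKey;
  } while (ExclusiveStartKey !== undefined);
  return items;
}

// Made-up stand-in for a table: each inner array is one "page" of results.
function makeFakeScan(pages) {
  return async ({ ExclusiveStartKey }) => {
    const index = ExclusiveStartKey ? ExclusiveStartKey.page : 0;
    const result = { Items: pages[index] };
    if (index + 1 < pages.length) {
      result.LastEvaluatedKey = { page: index + 1 };
    }
    return result;
  };
}
```

Note that this version has to collect every page before the caller sees a single item, which is exactly the part the generator approach avoids.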

The idea:

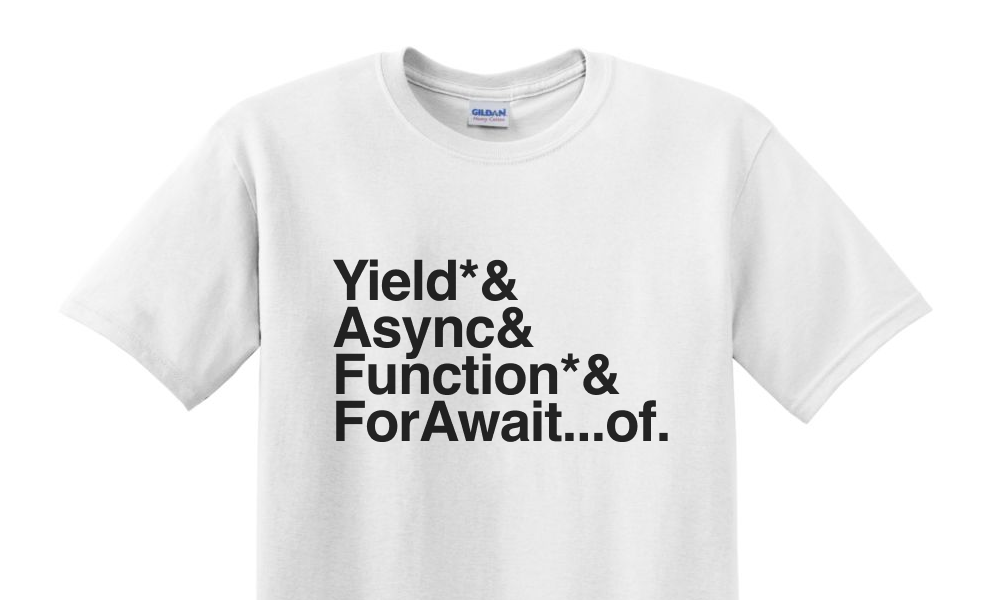

In JavaScript (or TypeScript) we can abstract this process and create an elegant interface for iterating over the results by using an async generator.

A generator uses lazy evaluation so that it returns results as they are requested. Consequently, it can make a query and return those results for our code to work on without having to query everything else first. It returns results from its iterator with a yield expression. (mdn docs)
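
To see the laziness in isolation, here is a tiny synchronous generator (nothing DynamoDB-specific, and the names `countTo` and `producedLog` are made up for the illustration). The body does not run until a value is requested, and it pauses again at each `yield`.

```javascript
// Records what the generator has actually done so far.
const producedLog = [];

function* countTo(limit) {
  for (let n = 1; n <= limit; n++) {
    producedLog.push(`producing ${n}`);
    yield n; // pause here until the next value is requested
  }
}

const counter = countTo(3);          // nothing inside countTo has run yet
const firstResult = counter.next();  // runs up to the first yield
// firstResult is { value: 1, done: false }
// producedLog is ["producing 1"]: values 2 and 3 have not been produced
```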

Since each page has multiple items, it’s more elegant to have our generator yield each item independently. That can be achieved using a yield* expression! (mdn docs)
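
As a small illustration with made-up data (`pagesOf` and `eachItem` are hypothetical names), `yield*` hands over to another iterable, here an array, and yields its elements one at a time:

```javascript
// Yields whole pages (arrays), the way a paginated API hands them back.
function* pagesOf() {
  yield [1, 2];
  yield [3];
}

// Yields individual items by delegating to each page's own iterator.
function* eachItem() {
  for (const page of pagesOf()) {
    yield* page;
  }
}

const flattened = [...eachItem()]; // [1, 2, 3]
```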

In order to make asynchronous calls inside the generator, we have to declare it as an async generator function by adding the async keyword. Now we can simply await the API requests as Promises. (mdn docs)
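
A minimal sketch of an async generator, with `fetchNumber` as a hypothetical stand-in for an API request that resolves a few milliseconds later:

```javascript
// Hypothetical stand-in for an API request.
const fetchNumber = (n) =>
  new Promise((resolve) => setTimeout(() => resolve(n * 10), 5));

async function* slowNumbers(count) {
  for (let n = 1; n <= count; n++) {
    // Wait for the Promise, then hand its value to the consumer.
    yield await fetchNumber(n);
  }
}

// Each call to next() returns a Promise for the usual { value, done } pair.
async function collectSlowNumbers() {
  const gen = slowNumbers(2);
  const a = await gen.next(); // { value: 10, done: false }
  const b = await gen.next(); // { value: 20, done: false }
  const c = await gen.next(); // { value: undefined, done: true }
  return [a, b, c];
}
```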

Combining all of these, we can create an auto-paginating Scan like so…

The code:
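
A minimal sketch of what this could look like. It assumes `docClient` has the call shape of the AWS SDK v2 `DocumentClient`, where `docClient.scan(params).promise()` resolves to an object with `Items` and, if there are more pages, `LastEvaluatedKey`. The names `autoPaginateScan` and `fakeDocClient` are made up for this sketch; `fakeDocClient` is an in-memory stand-in so it can be tried offline.

```javascript
// Async generator that yields items one by one and fetches the next page
// only when the consumer has used up the current one.
async function* autoPaginateScan(docClient, params) {
  let ExclusiveStartKey; // undefined on the first request
  do {
    const page = await docClient
      .scan({ ...params, ExclusiveStartKey })
      .promise();
    yield* (page.Items || []);                 // hand out this page's items
    ExclusiveStartKey = page.LastEvaluatedKey; // undefined when we're done
  } while (ExclusiveStartKey);
}

// Hypothetical in-memory client with the same call shape, for offline use.
// Each inner array of `pages` plays the role of one 1 MB result page.
function fakeDocClient(pages) {
  return {
    calls: 0, // how many Scan "requests" were made
    scan({ ExclusiveStartKey }) {
      const index = ExclusiveStartKey ? ExclusiveStartKey.page : 0;
      const result = { Items: pages[index] };
      if (index + 1 < pages.length) {
        result.LastEvaluatedKey = { page: index + 1 };
      }
      this.calls++;
      return { promise: async () => result };
    },
  };
}
```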

Now, inside an async function, we can simply iterate over the results of the generator using a for await…of statement! (mdn docs)

The example:
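
Something along these lines, where `fakeScanItems` is a made-up stand-in for the auto-paginating scan so the snippet runs on its own:

```javascript
// Stand-in for an auto-paginating scan: yields items from two fake pages.
async function* fakeScanItems() {
  const fakePages = [[{ id: 1 }, { id: 2 }], [{ id: 3 }]];
  for (const page of fakePages) {
    await null; // a real version would await a Scan request here
    yield* page;
  }
}

async function listIds() {
  const ids = [];
  // Page boundaries are invisible here: we just see one item after another.
  for await (const item of fakeScanItems()) {
    ids.push(item.id);
  }
  return ids; // resolves to [1, 2, 3]
}
```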

Querying multiple pages of results is no more difficult than iterating over an array! If we want to throttle or cut things short at any point, we can sleep in the for-loop or break out of it.
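
Here is a rough sketch of both ideas, using a made-up three-page source (`fakePagedItems`) that counts how many pages were requested. Breaking out of the loop stops the generator, so pages that were never reached are never fetched.

```javascript
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

let pagesRequested = 0;

// Stand-in for a paginated scan over three fake pages.
async function* fakePagedItems() {
  for (const page of [["a", "b"], ["c", "d"], ["e", "f"]]) {
    pagesRequested++; // a real version would send a Scan request here
    yield* page;
  }
}

async function takeFirst(limit, delayMs) {
  const taken = [];
  for await (const item of fakePagedItems()) {
    taken.push(item);
    if (taken.length >= limit) break; // cut things short
    await sleep(delayMs);             // throttle between items
  }
  return taken;
}
```

Calling `takeFirst(3, 1)` resolves to the first three items and leaves `pagesRequested` at 2, since the third page is never needed.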

You will need to be inside an async function to operate on the async generator, or be in a REPL that supports top-level await. Also, mind your read capacity quotas: DynamoDB isn’t made for regular full scans, and there are better tools out there if you’re processing truly large amounts of data.

Don’t repeat yourself: // DRY

I packaged this idea up a while back in a repo and an npm package: